[The quoted passages are taken directly from the Consultation on how GCSE, AS and A level grades should be awarded in summer 2021, produced by Ofqual.]

Here we are again. In spring and summer 2020 we spent time preparing for and implementing the system that was created in place of exams. Without training or support, teachers were required to rank students in each subject. This was difficult and time-consuming. It only worked as well as it did because in many schools teaching wasn’t being done in the usual way, and in the second half of the summer term exam leave would have been in place for Yr 11 and Yr 13 students anyway, so many teachers had gained time they could use to develop the rank orders and the centre assessment grades (CAGs).

So – to this year, and a new system to get to grips with.

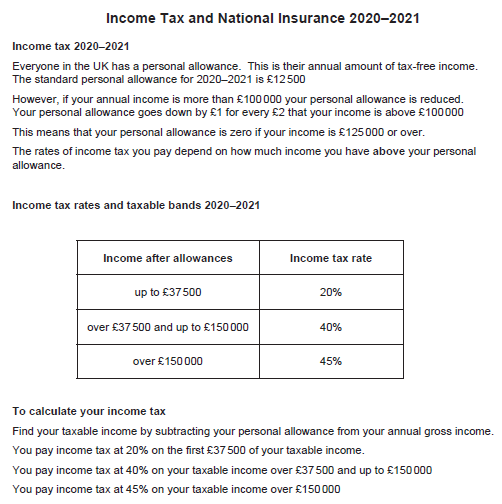

The system itself is going to be different. The consultation tells us that “grades would only change, in this process, as a result of human intervention.” This is code for “no algorithms will be used in the making of these exam results”.

Unfortunately, everything else is different too. First, schools are now required to have full teaching in place (having been explicitly threatened over this by the Secretary of State). Second, we are likely to have exam year groups in school for longer. Instead of pupils sitting externally marked exams in early June, teachers will mark papers sat in early June and use them, along with other evidence, to create a grade for their students. Third, the consultation says:

> To help teachers make objective decisions we propose that exam boards should provide guidance and training.

Hold on. Workload is greater than it has ever been, and there will be less gained time in the summer term. On top of that, teachers will now need to undergo training to do something we have never done before.

The exam boards haven’t yet written that training (the system hasn’t yet been decided on), and the deadline for teachers to submit the grades is 5 months away! In those 5 months we need to:

- finish the consultation;
- publish the response;
- create a system;
- write the training and roll it out to teachers;
- have teachers deploy it with their students;
- gather the data and put it all together to create the final grade.

Really?

Last summer a system was put in place at short notice. How did that one turn out?

System-wide change is hard. It’s hard to get it right at all, harder to get it right first time and harder still to get it right first time at speed. Add to that the fact that this isn’t just a new system for, say, providing vaccines, where it doesn’t particularly matter if 70-year-olds in one part of the country are inoculated a few weeks before those in a different area. For students’ exam results we need to ensure the system is fair across every school in the country. During a pandemic. With teachers who have even less time than usual.

I am not objecting to making changes to assessment. If the government wants to do things differently, properly consider the implications, run trials of alternative methods, etc, then that is fine … for exams in 2024 at the earliest.

My objection is to the speed with which this will need to be done: the clock is ticking. By the time the consultation closes there will be 4 ½ months before grades need to be submitted. My objection is to the additional pressure this will put on teachers, heads of department and senior leaders, especially head teachers. My objection is that students will be anxious, unsure of what they need to do, upset and, ultimately, not served well by a rushed system.

What to do instead? Don’t cancel exams. It’s that easy.

There are legitimate concerns about equity and access to education for every student. In this scenario we could allow for special consideration: we could take note of who hasn’t had access to wifi or computers. We could also give additional credit to those whose schools were closed earlier, who had to self-isolate, etc.

The huge resources that will be put into creating a new system for use this summer could instead be diverted to provide support for pupils who need it.

Everything in the consultation revolves around exams being cancelled and trying to make that work. By ignoring whether cancellation is the right decision, the consultation asks us to comment on the wrong thing.

The consultation itself isn’t going to give sensible results. How many people will answer the consultation without reading the full documentation? Lots.

> Question 1. To what extent do you agree or disagree that the grades awarded to students in 2021 should reflect the standard at which they are performing?

Surely no-one can disagree with this? It makes sense, doesn’t it?

If you read the consultation text, you see that this is being contrasted with the system from 2020, where students were awarded the grade their teachers thought they would have got. The question should include this information.

What does “the standard at which they are performing” mean? Well, later the consultation says:

> Teachers should assess students on the areas of content they have covered and can demonstrate their ability, while ensuring sufficient breadth of content coverage so as not to limit progression.

It appears that Ofqual does not expect students to cover the whole of the curriculum in a subject. Instead, students will be assessed on the parts they have studied. How much is “sufficient breadth of content coverage”? That isn’t clear.

> In the absence of exams, our view is that teachers, once provided with the necessary guidance and training, are best placed to assess the evidence of the standard at which their student is performing.

Exams don’t have to be ‘absent’. No, they really don’t. And while teachers _do_ know their pupils well and do know how well they are performing, they don’t know “the standard”, because we don’t standardise things. Not across schools. Not any more.

(We used to. But someone decided that national curriculum levels of attainment, which were the same across the whole of England, should be abolished, and that it made far more sense for every school to reinvent the wheel. Now we have a fragmented system that means we can’t compare across schools.)

Now I will go through sections of the consultation.

2 What the grades will mean

Remember: the intention is that students will be assessed on the parts of the content they have studied.

> Qualification grades indicate what a person who holds the qualification knows, understands and can do, and to what standard.

This won’t happen if each student is graded on only part of the course.

> For qualification grades to be meaningful, a person who holds a qualification with a higher grade must have shown that their knowledge, understanding or skills are at a higher standard than a person who holds a qualification with a lower grade.

This won’t work if different students have been assessed in different ways.

> We do not believe that teachers should be asked to decide the grade a student might have achieved had the pandemic not occurred. That would put them in an impossible position, as they would be required to imagine a situation that had not happened. It would also mean that those who use the grades would not know whether the grade indicated what a student knew, understood or could do or, rather, what they might have known, understood or could have done, had things been different.

The teachers who worked so hard in summer 2020 to create CAGs, and the students who received results in 2020, will be delighted to hear this!

> Teachers should assess students on the areas of content they have covered and can demonstrate their ability, while ensuring sufficient breadth of content coverage so as not to limit progression.

Rather than asking each school to create its own, different system for doing this, perhaps we could have a national method? Such as an exam?

3 When teachers should assess the standard at which students are performing

Here are some of the questions in this section:

> Question 2. To what extent do you agree or disagree that the alternative approach to awarding grades in summer 2021 should seek to encourage students to continue to engage with their education for the remainder of the academic year?

This assumes there should be an ‘alternative approach’. There shouldn’t. The consultation should be asking whether and how exams can take place, not assuming that they can’t.

How many people are likely to say “no” to the idea that students should continue their education during this academic year? What will be learned from the answers to this one?

> Question 3. When would you prefer that teachers make their final assessment of their students’ performance?

I would prefer that teachers _didn’t_ make the final assessment.

> For these reasons, we propose that teachers should make final assessments of the standard of their students’ performance during late May and early June. If the assessments were undertaken earlier, perhaps in April, then students would unnecessarily miss out on more of their education. If they were assessed later, perhaps in July, this would delay the release of results, as there would be insufficient time for teachers to assess their students and for the necessary internal and external quality assurance measures to be taken. This could in turn delay students’ progression at the start of the next academic year.

We have no way of knowing how long the quality assurance process will take, so we have to take Ofqual at its word on this. This is therefore an unanswerable question: it is clearly daft to hold the assessments too early or too late. Again: what will be learned from this question?

> Question 4. To what extent do you agree or disagree that teachers should be able to use evidence of the standard of a student’s performance from throughout their course?

“Should be able to use”. But how?

This feels like an obviously fair thing to do. “If my student has some good evidence from earlier in the course then I should be able to use it” seems reasonable. But how do we ensure a level playing field? Suppose mock exams happened in December for Yr 11 in some schools but were planned for January in others. Then not everyone has had the same opportunity.

You might say that I am just answering the question here, but it’s more than that: we don’t have the detail to know how this will be done, so any answers will be meaningless.

4 How teachers should determine the grades they submit to exam boards

> We propose that teachers should only take evidence-based decisions about the grade they recommend their students be issued. A breadth of evidence should inform a teacher’s assessment of their student’s deserved grade.

This is where the training for teachers comes in. The training that will need to be devised from scratch and delivered by exam boards, all done and dusted in only a few months.

If this is ever going to happen, it should be brought in with a lead-in time of several years. That would give teachers enough time to understand properly what it looks like and what sorts of things will count. It would also give students enough time to get used to working in ways that will showcase their abilities fairly. Tacking this on in a hurry now means it will be applied hugely unevenly.

Who will it harm the most? The already-disadvantaged. If exams have been cancelled because disadvantaged pupils would suffer … well, we need to put things in place to support them. We should not spend vast amounts of time and energy creating a new system that will still disadvantage them.

Having said that exams would be cancelled, the consultation heads its next part:

> 4.1 The use of exam board papers

I challenge anyone to read that without smiling. Exams have been cancelled, but instead students will sit ‘exam board papers’!

> We propose that teachers should assess their students objectively. To support them we propose the exam boards could provide guidance and training, along with papers which teachers could use to assess their students. The exam boards might work jointly on the guidance and training where appropriate. The consultation seeks views on the role of these papers in informing a teacher’s assessment of a student’s grade. Provision of papers by exam boards would support consistency within and between schools and colleges.

As would exams. As would exams. As would actual exams!

> The teacher, through the marking of the papers, could consider the evidence of the student’s work and use that to inform their assessment of the grade deserved. The exam boards could also sample teachers’ marking as part of the external quality assurance arrangements and to seek to ensure this was comparable across different types of school and college, wherever students are studying.

What? So presumably a higher mark in the paper would suggest, I don’t know, a higher grade in the subject? To ensure real consistency, why not get external people to mark them? They could be trained to do this and could mark lots of papers. We could call them “examiners” and they could be paid for doing it. And we would have returned to the system of having actual exams.

> The use of exam board papers could also help with appeals. We propose that the exam boards should use in their papers, questions that are similar in style and format to those in normal exam papers.

Read that again. The questions will be similar in style and format to those in normal exam papers. So they are exam papers, then.

> This means that the sorts of questions used will be familiar to students, who typically use past papers to help them prepare for their exams.

Like exams then.

> The exam boards might use a combination of questions from past papers and new questions to develop their papers.

No no no no no. Not past paper questions. Don’t even suggest this. Just don’t. All teachers are familiar with the student who gets 90% on a mock exam but subsequently gets 30% in the real thing. The reason is that they had done the past paper with a tutor or an older sibling, or had found the answers on The Student Room, etc, before the mock. In this scenario, those students who happen to have done a particular past paper (as a mock exam or a practice paper) will be advantaged. That’s not right.

> The nature of the papers set by the exam boards will need to be appropriate for the subject. Students must be given opportunities to show what they can do. For example, a student who was working towards a high grade in GCSE mathematics must be given the opportunity to show they could perform to a standard associated with that grade. Similarly, a student who is working at a lower grade standard must have access to material which reflects that fact.

Um – exam papers generally try to do this.

> We propose that the set of papers provided by the exam boards should cover a reasonable proportion of the content and that teachers should also have some choice of the topics on which their students could answer questions. The set of papers could allow teachers the ability to choose from a set of shorter papers, based on topics, to allow teachers options to take account of content that has not been fully taught due to the disruption.

We need more information about what this means. Will teachers be given a list of the topics covered in each question and told to select from those (“I’ll have a Pythagoras, a simultaneous equations and a ratio question, please”)? Or will they see the actual questions (“That’s a hard Pythagoras question – I think I prefer this one instead”)?

It is important to note that the exam boards don’t know for certain how easy or hard students will find each question they set. This is shown by the way grade boundaries are usually determined only _after_ the papers have been marked and data have been collated on how each question performed.

In the maths world, a few years ago, one of the exam boards tried to be helpful and gave estimated grade boundaries for a specimen paper for which they had no data. After lots of concerned TES Forum posts from very anxious teachers, they withdrew the boundaries. They haven’t issued such things since. If the experts don’t find this easy to do, how can we expect teachers to set appropriate grade boundaries? This is particularly hard because the exam boards won’t be collecting data this year on how hard candidates find each question.

> If the topics covered by the teacher’s assessment are too narrow, students will have less opportunity to show the standard to which they can perform. They would also not have demonstrated the breadth of knowledge they hold, which risks halting their ability to progress successfully with further study. We are seeking feedback on the minimum breadth of subject content a teacher must assess a student on.

This makes it appear as if the consultation is going to ask “how much” content must be assessed. It doesn’t. The closest it comes is Question 12:

> To what extent do you agree or disagree that teachers should be required to assess (either by use of the exam board papers or via other evidence) a certain minimum proportion of the overall subject content, for each subject?

This seems to me to be asking whether a minimum proportion should be tested, not what that minimum should be.

> The exact approach would have to be tailored for each subject with details confirmed by the exam boards following this consultation. We propose that the exam boards should provide guidance on how teachers should take account of other evidence of the standard at which the student was working and of factors that might have affected their performance in the papers. In all cases we propose that teachers should record the evidence on which they base their decision for each student. This will be essential if students choose to appeal. It will also be needed by the exam boards for quality assurance arrangements.

_If_ other evidence is going to be used then it should be recorded. But how do we ensure this is done fairly? How do we ensure every student has the opportunity to provide additional information? How do we ensure this is manageable for teachers? Teachers won’t want their students to be disadvantaged. But a teacher who currently has one exam class will have a vastly different workload in this process from one who has several. Those who teach in a sixth form college, for example, are likely to have around half of their students requiring grading and additional information. And remember, this is an unfamiliar process that will require training and the marking of exam papers. It is also to be done without any additional time being made available.

4.3 Other performance evidence

> We propose that teachers should be able to take other evidence of a student’s performance into account when deciding on the grade to be submitted to the exam board. If teachers do not use the exam board set papers, or even where they do, they should use additional ways to assess students and to gather evidence of the standard at which their students are performing. The exam boards would provide guidance on how they could do this.

Gathering more evidence. In what time frame? There won’t be time for exam boards to decide what is required or permitted, prepare the training and train teachers. Nor will there be time for teachers then to tell students what they could do to affect this. So it can only be things that exist already. That will advantage those who already happen to have done the things that are deemed to be appropriate.

> We propose that where teachers devise their own assessment materials, they should be comparable in demand to the papers provided by the exam boards.

Why would anyone do this? Why would anyone want this to happen?

There is plenty of evidence that writing exam papers is hard. It is reasonable for teachers to write end-of-topic tests, and to create formative tests that show which children can answer questions on a particular topic. But writing real, proper exam papers is hard. It’s done by highly trained and experienced professionals, and even they (as Twitter shows every now and then) occasionally get it wrong.

Writing a single question without flaws is hard. Creating an exam paper that provides a good mix of questions and difficulty is on a different level, and teachers just aren’t used to doing it. Plus there’s the chance that pupils will get used to the style of questions their teacher poses … and will therefore answer a ‘home-made’ paper better. Plus the teachers will know the questions their students are going to get, which doesn’t seem ideal.

Why would this be worth doing? Only if teachers in a school were convinced it would benefit their students (perhaps, legitimately, by allowing the students to demonstrate better what they can do). So if this is an advantage to the students, which teachers and schools will have the capacity, skills and experience to make it happen? And who will suffer as a result?

> Any assessment must allow students to demonstrate the standard at which they can perform. We propose that any teacher devised assessments used to support the final assessment should be used at the same time as the exam board papers would be taken, to avoid any students being unfairly advantaged or disadvantaged by the timing of when they are assessed.

A legitimate use of teacher-devised papers, it seems to me, would be to gather additional information ahead of the exam period, to go alongside the exam papers created by the boards. But that isn’t being permitted.

> Teacher devised assessments should be supported by mark schemes, in order to support consistent marking within a school or college and any appeals. We propose that the exam boards should provide guidance for each subject on the relative use of different forms of performance evidence.

So the exam boards now need to train people in how to write and use exam papers. In about 4 months. Good luck with that.

> We propose that, in the majority of circumstances, greater weight should be given to evidence of a student’s performance that is closer to the time of the final assessment.

Does this mean that greater weight is given to topics that were studied closer to the end of Yr 11? Or does it mean greater weight for assessments that take place closer to the end of Yr 11? If the latter, then the easiest way to do this is to have exams.

> We are also aware that it may be more difficult to draw on wider evidence for students whose education has been most disrupted and ask you bear this in mind when answering the questions here and in the equality impact assessment section.

> Question 20. To what extent do you agree or disagree that a breadth of evidence should inform teachers’ judgements?

I know that this will be tempting for lots of people. There are those who believe other evidence ought to be part of assessing final grades, alongside exams. While I think it is reasonable to hold this position, I worry that some will see agreeing with this question as a way to usher that in.

At the risk of repetition: there isn’t time to put this in place properly this year, and it will be applied unevenly. The pupils who will be disadvantaged by it are those who already face disadvantage. Please don’t agree with this question just because you are ideologically in favour of it: the practicalities mean it will be a nightmare this year.

5 The assessment period

> If schools and colleges use exam-board-provided papers or create their own, we propose they should be used by teachers within a set period of time. If students who are completing the papers do so at different times there is a risk that students taking the papers later in the window might be at an advantage, particularly if the content of the papers is leaked.

I love the optimistic use of “if” here. I would replace it with “when the content of the papers is leaked”. In a normal year, the day after a maths paper is sat, there are videos of worked answers on YouTube and threads about it on The Student Room. This means schools can’t use those papers as mock exams the following year, which is a source of frustration for many. Of course the same thing will happen here.

> This risk could be reduced by: (a) the exam boards creating a menu of papers from which teachers would choose. The papers could be deliberately published shortly before the assessment window opened, although students would not know which one(s) they would be required to complete …

By “published”, does it mean that the papers would be made openly available to everyone, including students? If so, those with access to additional teaching, private tutoring, etc, will be able to go through them. And they will appear as worked answers on social media.

Or does it mean that schools will only be able to see the papers (which will still be kept away from students) a short while before choosing the questions they want their students to answer? That will lead to a mad scramble just before the exams. What if, at that late date, teachers decide the questions aren’t appropriate for their students? Do they then begin to write their own paper?

> (b) all students completing the papers for a particular subject within a certain time frame – and we are seeking views on how long that should be.
>
> It is important to consider what would happen if the course of the pandemic is such that papers cannot safely be sat within a school or college (see the next section). Following this consultation, the exam boards will seek views from schools and colleges on the dates between which the papers for each subject can be used by teachers. We propose that, in the interests of fairness and consistency, students assessed with and without the use of the exam board papers should be assessed as late as possible in the academic year.

If there is an assessment window, what is the benefit to schools of having their students sit the papers early in that window? Some will want to defer them as late as possible to allow more lessons to take place. And it will be extremely difficult to police leaks of papers and who might have seen them.

We shouldn’t forget that students will be taking their usual subjects. For Yr 11 that might be 9 or 10 subjects. Even if there is only one exam in each subject, these still need to be spread out, which still requires an exam timetable. Add that to the list of things every school must do separately: create an exam timetable. And instead of the usual 6-week-long timetable produced by the exam boards, it all needs to happen in a shorter time frame. More exams in each subject would make this more challenging; fewer would make the results less reliable.

7 Supporting teachers

> Question 31. To what extent do you agree or disagree that the exam boards should provide support and information to schools and colleges to help them meet the assessment requirements?

Will anyone disagree with this?

9 External quality assurance

> We propose that the exam boards should quality assure the approach taken by each school and college and that the exam boards should work together, where appropriate, to make sure their approaches are both consistent and do not impose unnecessary burden on schools and colleges.

_All_ of this is an unnecessary burden on schools and colleges, caused by the decision to cancel exams.

> We propose that the exam boards should engage with every school or college to consider the approach it is taking.

Will this be on a subject basis or a centre basis? If the former, will exam boards have enough capacity to do it? If the latter, does that mean the exact same approach will have to be taken in every subject?

> All schools and colleges, regardless of cohort size, geography, demographic or centre type could be subject to further checks. We would also expect the exam boards to target some of their quality assurance activities. They might, for example, spend more time with a new school or college verifying that compliant processes are in place …

This is a brand-new process. No-one has done it before. _All_ schools and colleges will be ‘new’ to doing this.

10 How students could appeal their grade

> We propose that the appeal should be considered by a competent person appointed by the school or college, who had not been involved with the original assessment – this could be another teacher in the school or college or a teacher from another school or college.

This is a very odd way of doing things. The person considering an appeal will be chosen by the school, and could even be a colleague of the teacher who made the original judgement.

> … an appeal to the exam board would be on the grounds that the school or college had not acted in line with the exam board’s procedural requirements, either when assessing the standard at which the student was performing or when considering the student’s appeal. A student could not appeal to the exam board on the basis that either the teacher assessment or the appeal decision was not a reasonable exercise of academic judgment where the correct procedure had been followed.

How will students know that the procedural requirements haven’t been followed? Presumably every school will have to publish details of what it has done and what evidence it has used. Is that a sensible use of time?

> To relieve pressure on the appeals process, we are seeking views on whether results day(s) in 2021 should be brought forward as this could be of benefit to students, schools and colleges and further and higher education providers. Students should only be issued with their result once external quality assurance by the exam boards has been completed. If there is an appetite for results to be brought forward we will consider the interaction between the timing of students receiving their results and the results becoming formal for the purpose of university admissions, working with the further and higher education sector to ensure no delay to existing admissions timelines. We propose that the exam boards should publish information for use by schools and colleges on how to deal with appeals.

Does this mean appeals would happen during the summer holiday? Would teachers need to be on hand to respond during that time?

11 Private candidates

> We want to build into the approach opportunities for private candidates (for example students studying independently, and home educated students) to be awarded grades in summer 2021.

The proposals give four suggestions, of which the third is:

> (c) for the exam boards to run normal exams for private candidates to take in the summer of 2021 – appropriate venues would need to be provided.

So exams haven’t really been “cancelled” then. The only difference between this and _all_ students sitting exams is the number of students involved. If it’s deemed fair for us to consider in this consultation that private candidates might sit exams, surely it’s fair for us to be asked whether _everyone_ should be allowed to sit exams? Question 58 says:

> Question 58. If the preferred option for private candidates is an exam series, should any other students be permitted to enter to also sit an exam?

Yes! All of them!

Impact on schools and colleges

> We expect there would be one-off, direct costs and administrative burdens to schools and colleges associated with the following activities:
>
> - familiarisation with information and guidance from exam boards on teacher assessment and submitted grades
> - communication and training from senior leaders to teaching staff on teacher assessment and submitted grades
> - marking and quality assurance of teacher assessments and submitted grades
> - amendments to centre systems to enable the required information to be gathered and submitted to exam boards in a format specified by them
> - managing high volumes of enquiries from candidates and parents
> - managing potentially high volumes of appeals

Of these, which will fall on teaching staff and senior leaders in school? Most of them. And even if exams officers make the amendments to centre systems and are the first point of contact for enquiries from candidates and parents, teaching staff will need to learn how to use the centre system, will need to enter data they never used to have to input (because exams were marked externally) and will need to respond to at least some of the queries.

But it’s OK, because there are things schools won’t need to do that will balance this out:

Schools and colleges will be delivering the alternative arrangements in place of, and not in addition to, the usual range of activity required to deliver summer exams in their centre including, for example, secure handling of exam papers and scripts, invigilation of exams and dealing with any cases of possible malpractice and maladministration arising in usual exam delivery.

Let’s look at these in turn:

secure handling of exam papers and scripts

This will still need to happen to maintain the integrity of the papers. In any case, it has never been carried out by senior leaders and teachers but by exams officers.

invigilation of exams

Teachers aren’t allowed to invigilate exams; external people are paid to do this in a normal year. In fact, who will be doing that this year, when the exam papers are sat in school? There will _have_ to be invigilation happening in any case.

dealing with any cases of possible malpractice and maladministration arising in usual exam delivery

This will also still need to be done (and would have been carried out by the exams officers, not by teachers, in any case).

So this section lays out the vast amount of extra work that teachers and senior leaders will have, countered by things that are either a mirage or would have been done by non-teaching members of staff anyway.

We acknowledge that the burden of delivering the revised arrangements could be greater and more challenging for both exam boards and centres where staff availability is affected by the coronavirus (COVID-19) and centres are closed for normal teaching.

This one hadn’t occurred to me. If there is a short timescale for exam board papers to be opened and for decisions to be made about which parts to use, then teachers being unwell will mess things up. Normal exams could be invigilated as usual, perhaps with the need to recruit more invigilators if there is illness among them, because no specialist subject knowledge or knowledge of the students is required to carry out the invigilation process.

We also acknowledge the exceptional impact of the coronavirus (COVID-19) pandemic on the workload of teachers and their colleagues.

I am pleased to see this written down, but these proposals suggest it has then been entirely ignored. Teachers and senior leaders are being asked to take on an intolerable number of additional duties.

Impact on students

Students taking the relevant qualifications are directly affected by the proposed arrangements. We are focused on making sure they are not disadvantaged and that disruption to their planned progression is minimised.

It is right that students should not be disadvantaged and that their progression should not be disrupted. Unfortunately, these proposals don’t achieve this goal as well as exams would. We should be asked for our thoughts on ordinary exams as well.

Summary:

What has been suggested here is vastly inferior to having exams and tweaking how they are organised and managed.

Here are the downsides of these proposals:

· Huge extra workload for teachers and senior leaders, with the need to:
  o Write/choose exam questions
  o Justify the choices
  o Be trained in how to assess students by non-exam means
  o Set and mark the exam questions
  o Decide on the grade for each student
· Teachers will need to do all of this within a period of about 4 months, while teaching full-time and remotely, in a pandemic.
· Anxiety amongst students who have been preparing for one system and who will now have to change to something new, something that neither they nor their teachers have experience of.
· The fact that exam boards and teachers will have to do all of this at speed.
· Disadvantaged pupils are likely to be further disadvantaged.
· Despite best efforts, grades won’t be moderated fairly across schools; there will be huge differences in the questions that are used and in the way additional evidence is taken into account. It won’t be fair and it won’t be seen to be fair.
· Exam questions will be leaked and will make it into the public domain, giving some students an unfair advantage.
· There is an element of chance in play here: if students happened to sit a mock exam in December then that might be usable, but cancelled January mocks are not.

How can we deal with these problems?

The answer to this is boring and predictable: having exams as usual would deal with every single issue listed above.

Here are the downsides of having exams as usual:

1. In different schools the teaching provision during lockdown has been different, and not all of the syllabus can be covered in full.
2. Disadvantaged students are likely to have suffered disproportionately (perhaps because of a lack of access to wifi, IT equipment, a place to work, equipment for art, etc).
3. Schools might not be able to open on a particular date in June for students to come in to sit their exams, or certain students might have to self-isolate at the time of an exam.

How can we deal with these problems?

It is reasonable to say that if I disagree with one set of proposals, I should come up with an alternative, so here are some responses to the problems caused by having exams as usual:

1&2) Additional resource needs to be put in place in schools that have been more disadvantaged by lockdowns and for students who have been more affected. If Yr 11 and Yr 13 have been affected more in some schools than in others, then so have Yr 10, Yr 7, Yr 5, etc. Changing the entire exam system for this one year won’t help those other year groups and doesn’t deal with the underlying issue. Special consideration could be applied by exam boards, with schools asked to say which students are likely to have been disadvantaged, either through lack of access to equipment or through additional periods of self-isolation.

3) This might equally be the case for the proposed new system, and whatever solution is used there could also be used for ordinary exams.

It seems far more sensible to tweak the usual exams rather than throw them out (and replace them with different exams). These tweaks could be different in each subject. For example, in GCSE maths there are currently 3 exam papers, all of which examine the majority of the curriculum. Removing one paper would not be a major issue and would save on exam time (although it wouldn’t solve the lack of coverage of the curriculum). If there are distinct topic areas within exams (this might be more likely at A-level), then it could be feasible for students to choose, say, 2 of the 3 topics to answer questions on. Alternatively, in all examined subjects the topic for each question could be made explicit, and schools could declare in advance which topics have been covered in their school; questions on topics that haven’t been covered would then be ignored for those students (with a minimum requirement in place).

My preferred suggestion would be for exam papers to be set as usual and for each student’s lowest-performing 20% of questions to be ignored, so it wouldn’t matter if they had only covered 80% of the content of the exam.

Is this perfect? No. Is it better than the proposed system? Yes! And it will take vastly less time, energy and resource to put in place: time, energy and resource that could be diverted to the students who need it most.

A plea

I have strong views on this. If you do too, then please take part in the consultation (whether you agree with me or not!). I will be using the ‘any other comments’ questions to say how much I disagree with the approach being taken.

Please read the full consultation document first and then reply to the consultation. The link for both of these is here: https://www.gov.uk/government/consultations/consultation-on-how-gcse-as-and-a-level-grades-should-be-awarded-in-summer-2021

The deadline is Friday 29 January 2021.